diff --git a/RELEASE_v0.8.0.md b/RELEASE_v0.8.0.md

new file mode 100644

index 0000000000..57c8b05aba

--- /dev/null

+++ b/RELEASE_v0.8.0.md

@@ -0,0 +1,346 @@

+# Hermes Agent v0.8.0 (v2026.4.8)

+

+**Release Date:** April 8, 2026

+

+> The intelligence release — background task auto-notifications, free MiMo v2 Pro on Nous Portal, live model switching across all platforms, self-optimized GPT/Codex guidance, native Google AI Studio, smart inactivity timeouts, approval buttons, MCP OAuth 2.1, and 209 merged PRs with 82 resolved issues.

+

+---

+

+## ✨ Highlights

+

+- **Background Process Auto-Notifications (`notify_on_complete`)** — Background tasks can now automatically notify the agent when they finish. Start a long-running process (AI model training, test suites, deployments, builds) and the agent gets notified on completion — no polling needed. The agent can keep working on other things and pick up results when they land. ([#5779](https://github.com/NousResearch/hermes-agent/pull/5779))

+

+- **Free Xiaomi MiMo v2 Pro on Nous Portal** — Nous Portal now supports the free-tier Xiaomi MiMo v2 Pro model for non-vision auxiliary tasks such as compression and summarization, with free-tier model gating and pricing display in model selection. ([#6018](https://github.com/NousResearch/hermes-agent/pull/6018), [#5880](https://github.com/NousResearch/hermes-agent/pull/5880))

+

+- **Live Model Switching (`/model` Command)** — Switch models and providers mid-session from the CLI, Telegram, Discord, Slack, or any gateway platform. Aggregator-aware resolution keeps you on OpenRouter/Nous when possible, with automatic cross-provider fallback when needed. Telegram and Discord also get interactive model pickers with inline buttons. ([#5181](https://github.com/NousResearch/hermes-agent/pull/5181), [#5742](https://github.com/NousResearch/hermes-agent/pull/5742))

+

+- **Self-Optimized GPT/Codex Tool-Use Guidance** — The agent diagnosed and patched 5 failure modes in GPT and Codex tool calling through automated behavioral benchmarking, improving tool-call reliability on OpenAI models. Also includes execution discipline guidance and thinking-only prefill continuation for structured reasoning. ([#6120](https://github.com/NousResearch/hermes-agent/pull/6120), [#5414](https://github.com/NousResearch/hermes-agent/pull/5414), [#5931](https://github.com/NousResearch/hermes-agent/pull/5931))

+

+- **Google AI Studio (Gemini) Native Provider** — Direct access to Gemini models through Google's AI Studio API. Includes automatic models.dev registry integration for real-time context length detection across any provider. ([#5577](https://github.com/NousResearch/hermes-agent/pull/5577))

+

+- **Inactivity-Based Agent Timeouts** — Gateway and cron timeouts now track actual tool activity instead of wall-clock time. Long-running tasks that are actively working will never be killed — only truly idle agents time out. ([#5389](https://github.com/NousResearch/hermes-agent/pull/5389), [#5440](https://github.com/NousResearch/hermes-agent/pull/5440))

+

+- **Approval Buttons on Slack & Telegram** — Approve dangerous commands with native platform buttons instead of typing `/approve`. Slack gets thread context preservation; Telegram gets emoji reactions for approval status. ([#5890](https://github.com/NousResearch/hermes-agent/pull/5890), [#5975](https://github.com/NousResearch/hermes-agent/pull/5975))

+

+- **MCP OAuth 2.1 PKCE + OSV Malware Scanning** — Full standards-compliant OAuth for MCP server authentication, plus automatic malware scanning of MCP extension packages via the OSV vulnerability database. ([#5420](https://github.com/NousResearch/hermes-agent/pull/5420), [#5305](https://github.com/NousResearch/hermes-agent/pull/5305))

+

+- **Centralized Logging & Config Validation** — Structured logging to `~/.hermes/logs/` (agent.log + errors.log) with the `hermes logs` command for tailing and filtering. Config structure validation catches malformed YAML at startup before it causes cryptic failures. ([#5430](https://github.com/NousResearch/hermes-agent/pull/5430), [#5426](https://github.com/NousResearch/hermes-agent/pull/5426))

+

+- **Plugin System Expansion** — Plugins can now register CLI subcommands, receive request-scoped API hooks with correlation IDs, prompt for required env vars during install, and hook into session lifecycle events (finalize/reset). ([#5295](https://github.com/NousResearch/hermes-agent/pull/5295), [#5427](https://github.com/NousResearch/hermes-agent/pull/5427), [#5470](https://github.com/NousResearch/hermes-agent/pull/5470), [#6129](https://github.com/NousResearch/hermes-agent/pull/6129))

+

+- **Matrix Tier 1 & Platform Hardening** — Matrix gets reactions, read receipts, rich formatting, and room management. Discord adds channel controls and ignored channels. Signal gets full MEDIA: tag delivery. Mattermost gets file attachments. Comprehensive reliability fixes across all platforms. ([#5275](https://github.com/NousResearch/hermes-agent/pull/5275), [#5975](https://github.com/NousResearch/hermes-agent/pull/5975), [#5602](https://github.com/NousResearch/hermes-agent/pull/5602))

+

+- **Security Hardening Pass** — Consolidated SSRF protections, timing attack mitigations, tar traversal prevention, credential leakage guards, cron path traversal hardening, and cross-session isolation. Terminal workdir sanitization across all backends. ([#5944](https://github.com/NousResearch/hermes-agent/pull/5944), [#5613](https://github.com/NousResearch/hermes-agent/pull/5613), [#5629](https://github.com/NousResearch/hermes-agent/pull/5629))

+

+---

+

+## 🏗️ Core Agent & Architecture

+

+### Provider & Model Support

+- **Native Google AI Studio (Gemini) provider** with models.dev integration for automatic context length detection ([#5577](https://github.com/NousResearch/hermes-agent/pull/5577))

+- **`/model` command — full provider+model system overhaul** — live switching across CLI and all gateway platforms with aggregator-aware resolution ([#5181](https://github.com/NousResearch/hermes-agent/pull/5181))

+- **Interactive model picker for Telegram and Discord** — inline button-based model selection ([#5742](https://github.com/NousResearch/hermes-agent/pull/5742))

+- **Nous Portal free-tier model gating** with pricing display in model selection ([#5880](https://github.com/NousResearch/hermes-agent/pull/5880))

+- **Model pricing display** for OpenRouter and Nous Portal providers ([#5416](https://github.com/NousResearch/hermes-agent/pull/5416))

+- **xAI (Grok) prompt caching** via `x-grok-conv-id` header ([#5604](https://github.com/NousResearch/hermes-agent/pull/5604))

+- **Grok added to tool-use enforcement models** for direct xAI usage ([#5595](https://github.com/NousResearch/hermes-agent/pull/5595))

+- **MiniMax TTS provider** (speech-2.8) ([#4963](https://github.com/NousResearch/hermes-agent/pull/4963))

+- **Non-agentic model warning** — warns users when loading Hermes LLM models not designed for tool use ([#5378](https://github.com/NousResearch/hermes-agent/pull/5378))

+- **Ollama Cloud auth, /model switch persistence**, and alias tab completion ([#5269](https://github.com/NousResearch/hermes-agent/pull/5269))

+- **Preserve dots in OpenCode Go model names** (minimax-m2.7, glm-4.5, kimi-k2.5) ([#5597](https://github.com/NousResearch/hermes-agent/pull/5597))

+- **MiniMax models 404 fix** — strip /v1 from Anthropic base URL for OpenCode Go ([#4918](https://github.com/NousResearch/hermes-agent/pull/4918))

+- **Provider credential reset windows** honored in pooled failover ([#5188](https://github.com/NousResearch/hermes-agent/pull/5188))

+- **OAuth token sync** between credential pool and credentials file ([#4981](https://github.com/NousResearch/hermes-agent/pull/4981))

+- **Stale OAuth credentials** no longer block OpenRouter users on auto-detect ([#5746](https://github.com/NousResearch/hermes-agent/pull/5746))

+- **Codex OAuth credential pool disconnect** + expired token import fix ([#5681](https://github.com/NousResearch/hermes-agent/pull/5681))

+- **Codex pool entry sync** from `~/.codex/auth.json` on exhaustion — @GratefulDave ([#5610](https://github.com/NousResearch/hermes-agent/pull/5610))

+- **Auxiliary client payment fallback** — retry with next provider on 402 ([#5599](https://github.com/NousResearch/hermes-agent/pull/5599))

+- **Auxiliary client resolves named custom providers** and 'main' alias ([#5978](https://github.com/NousResearch/hermes-agent/pull/5978))

+- **Use mimo-v2-pro** for non-vision auxiliary tasks on Nous free tier ([#6018](https://github.com/NousResearch/hermes-agent/pull/6018))

+- **Vision auto-detection** tries main provider first ([#6041](https://github.com/NousResearch/hermes-agent/pull/6041))

+- **Provider re-ordering and Quick Install** — @austinpickett ([#4664](https://github.com/NousResearch/hermes-agent/pull/4664))

+- **Nous OAuth access_token** no longer used as inference API key — @SHL0MS ([#5564](https://github.com/NousResearch/hermes-agent/pull/5564))

+- **HERMES_PORTAL_BASE_URL env var** respected during Nous login — @benbarclay ([#5745](https://github.com/NousResearch/hermes-agent/pull/5745))

+- **Env var overrides** for Nous portal/inference URLs ([#5419](https://github.com/NousResearch/hermes-agent/pull/5419))

+- **Z.AI endpoint auto-detect** via probe and cache ([#5763](https://github.com/NousResearch/hermes-agent/pull/5763))

+- **MiniMax context lengths, model catalog, thinking guard, aux model, and config base_url** corrections ([#6082](https://github.com/NousResearch/hermes-agent/pull/6082))

+- **Community provider/model resolution fixes** — salvaged 4 community PRs + MiniMax aux URL ([#5983](https://github.com/NousResearch/hermes-agent/pull/5983))

+

+### Agent Loop & Conversation

+- **Self-optimized GPT/Codex tool-use guidance** via automated behavioral benchmarking — agent self-diagnosed and patched 5 failure modes ([#6120](https://github.com/NousResearch/hermes-agent/pull/6120))

+- **GPT/Codex execution discipline guidance** in system prompts ([#5414](https://github.com/NousResearch/hermes-agent/pull/5414))

+- **Thinking-only prefill continuation** for structured reasoning responses ([#5931](https://github.com/NousResearch/hermes-agent/pull/5931))

+- **Accept reasoning-only responses** without retries — set content to "(empty)" instead of retrying indefinitely ([#5278](https://github.com/NousResearch/hermes-agent/pull/5278))

+- **Jittered retry backoff** — exponential backoff with jitter for API retries ([#6048](https://github.com/NousResearch/hermes-agent/pull/6048))

+- **Smart thinking block signature management** — preserve and manage Anthropic thinking signatures across turns ([#6112](https://github.com/NousResearch/hermes-agent/pull/6112))

+- **Coerce tool call arguments** to match JSON Schema types — fixes models that send strings instead of numbers/booleans ([#5265](https://github.com/NousResearch/hermes-agent/pull/5265))

+- **Save oversized tool results to file** instead of destructive truncation ([#5210](https://github.com/NousResearch/hermes-agent/pull/5210))

+- **Sandbox-aware tool result persistence** ([#6085](https://github.com/NousResearch/hermes-agent/pull/6085))

+- **Streaming fallback** improved after edit failures ([#6110](https://github.com/NousResearch/hermes-agent/pull/6110))

+- **Codex empty-output gaps** covered in fallback + normalizer + auxiliary client ([#5724](https://github.com/NousResearch/hermes-agent/pull/5724), [#5730](https://github.com/NousResearch/hermes-agent/pull/5730), [#5734](https://github.com/NousResearch/hermes-agent/pull/5734))

+- **Codex stream output backfill** from output_item.done events ([#5689](https://github.com/NousResearch/hermes-agent/pull/5689))

+- **Stream consumer creates new message** after tool boundaries ([#5739](https://github.com/NousResearch/hermes-agent/pull/5739))

+- **Codex validation aligned** with normalization for empty stream output ([#5940](https://github.com/NousResearch/hermes-agent/pull/5940))

+- **Bridge tool-calls** in copilot-acp adapter ([#5460](https://github.com/NousResearch/hermes-agent/pull/5460))

+- **Filter transcript-only roles** from chat-completions payload ([#4880](https://github.com/NousResearch/hermes-agent/pull/4880))

+- **Context compaction failures fixed** on temperature-restricted models — @MadKangYu ([#5608](https://github.com/NousResearch/hermes-agent/pull/5608))

+- **Sanitize tool_calls for all strict APIs** (Fireworks, Mistral, etc.) — @lumethegreat ([#5183](https://github.com/NousResearch/hermes-agent/pull/5183))
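+
+As a rough illustration of the jittered retry backoff item in the list above, the sketch below shows one common variant, exponential backoff with "full jitter". It is not taken from the Hermes codebase: `backoff_delay`, `call_with_retries`, and the `base`/`cap` defaults are hypothetical names and values chosen for this example, and the actual change in #6048 may work differently.
+
+```python
+import random
+import time
+
+
+def backoff_delay(attempt, base=1.0, cap=60.0):
+    """Return a randomized delay in seconds for a zero-based retry attempt."""
+    # Exponential ceiling: base, 2*base, 4*base, ... limited to cap.
+    ceiling = min(cap, base * (2 ** attempt))
+    # Full jitter: pick uniformly in [0, ceiling] so that callers that
+    # failed at the same moment do not retry in lockstep.
+    return random.uniform(0.0, ceiling)
+
+
+def call_with_retries(fn, max_attempts=5, sleep=time.sleep):
+    """Call fn(), retrying with jittered backoff until it succeeds."""
+    for attempt in range(max_attempts):
+        try:
+            return fn()
+        except Exception:  # a real client would catch only retryable errors
+            if attempt == max_attempts - 1:
+                raise  # out of attempts: surface the last error
+            sleep(backoff_delay(attempt))
+```
+
+Passing `sleep` as a parameter keeps the helper easy to exercise in tests without real waiting.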

+

+### Memory & Sessions

+- **Supermemory memory provider** — new memory plugin with multi-container, search_mode, identity template, and env var override ([#5737](https://github.com/NousResearch/hermes-agent/pull/5737), [#5933](https://github.com/NousResearch/hermes-agent/pull/5933))

+- **Shared thread sessions** by default — multi-user thread support across gateway platforms ([#5391](https://github.com/NousResearch/hermes-agent/pull/5391))

+- **Subagent sessions linked to parent** and hidden from session list ([#5309](https://github.com/NousResearch/hermes-agent/pull/5309))

+- **Profile-scoped memory isolation** and clone support ([#4845](https://github.com/NousResearch/hermes-agent/pull/4845))

+- **Thread gateway user_id to memory plugins** for per-user scoping ([#5895](https://github.com/NousResearch/hermes-agent/pull/5895))

+- **Honcho plugin drift overhaul** + plugin CLI registration system ([#5295](https://github.com/NousResearch/hermes-agent/pull/5295))

+- **Honcho holographic prompt and trust score** rendering preserved ([#4872](https://github.com/NousResearch/hermes-agent/pull/4872))

+- **Honcho doctor fix** — use recall_mode instead of memory_mode — @techguysimon ([#5645](https://github.com/NousResearch/hermes-agent/pull/5645))

+- **RetainDB** — API routes, write queue, dialectic, agent model, file tools fixes ([#5461](https://github.com/NousResearch/hermes-agent/pull/5461))

+- **Hindsight memory plugin overhaul** + memory setup wizard fixes ([#5094](https://github.com/NousResearch/hermes-agent/pull/5094))

+- **mem0 API v2 compat**, prefetch context fencing, secret redaction ([#5423](https://github.com/NousResearch/hermes-agent/pull/5423))

+- **mem0 env vars merged** with mem0.json instead of either/or ([#4939](https://github.com/NousResearch/hermes-agent/pull/4939))

+- **Clean user message** used for all memory provider operations ([#4940](https://github.com/NousResearch/hermes-agent/pull/4940))

+- **Silent memory flush failure** on /new and /resume fixed — @ryanautomated ([#5640](https://github.com/NousResearch/hermes-agent/pull/5640))

+- **OpenViking atexit safety net** for session commit ([#5664](https://github.com/NousResearch/hermes-agent/pull/5664))

+- **OpenViking tenant-scoping headers** for multi-tenant servers ([#4936](https://github.com/NousResearch/hermes-agent/pull/4936))

+- **ByteRover brv query** runs synchronously before LLM call ([#4831](https://github.com/NousResearch/hermes-agent/pull/4831))

+

+---

+

+## 📱 Messaging Platforms (Gateway)

+

+### Gateway Core

+- **Inactivity-based agent timeout** — replaces wall-clock timeout with smart activity tracking; long-running active tasks never killed ([#5389](https://github.com/NousResearch/hermes-agent/pull/5389))

+- **Approval buttons for Slack & Telegram** + Slack thread context preservation ([#5890](https://github.com/NousResearch/hermes-agent/pull/5890))

+- **Live-stream /update output** + forward interactive prompts to user ([#5180](https://github.com/NousResearch/hermes-agent/pull/5180))

+- **Infinite timeout support** + periodic notifications + actionable error messages ([#4959](https://github.com/NousResearch/hermes-agent/pull/4959))

+- **Duplicate message prevention** — gateway dedup + partial stream guard ([#4878](https://github.com/NousResearch/hermes-agent/pull/4878))

+- **Webhook delivery_info persistence** + full session id in /status ([#5942](https://github.com/NousResearch/hermes-agent/pull/5942))

+- **Tool preview truncation** respects tool_preview_length in all/new progress modes ([#5937](https://github.com/NousResearch/hermes-agent/pull/5937))

+- **Short preview truncation** restored for all/new tool progress modes ([#4935](https://github.com/NousResearch/hermes-agent/pull/4935))

+- **Update-pending state** written atomically to prevent corruption ([#4923](https://github.com/NousResearch/hermes-agent/pull/4923))

+- **Approval session key isolated** per turn ([#4884](https://github.com/NousResearch/hermes-agent/pull/4884))

+- **Active-session guard bypass** for /approve, /deny, /stop, /new ([#4926](https://github.com/NousResearch/hermes-agent/pull/4926), [#5765](https://github.com/NousResearch/hermes-agent/pull/5765))

+- **Typing indicator paused** during approval waits ([#5893](https://github.com/NousResearch/hermes-agent/pull/5893))

+- **Caption check** uses exact line-by-line match instead of substring (all platforms) ([#5939](https://github.com/NousResearch/hermes-agent/pull/5939))

+- **MEDIA: tags stripped** from streamed gateway messages ([#5152](https://github.com/NousResearch/hermes-agent/pull/5152))

+- **MEDIA: tags extracted** from cron delivery before sending ([#5598](https://github.com/NousResearch/hermes-agent/pull/5598))

+- **Profile-aware service units** + voice transcription cleanup ([#5972](https://github.com/NousResearch/hermes-agent/pull/5972))

+- **Thread-safe PairingStore** with atomic writes — @CharlieKerfoot ([#5656](https://github.com/NousResearch/hermes-agent/pull/5656))

+- **Sanitize media URLs** in base platform logs — @WAXLYY ([#5631](https://github.com/NousResearch/hermes-agent/pull/5631))

+- **Reduce Telegram fallback IP activation log noise** — @MadKangYu ([#5615](https://github.com/NousResearch/hermes-agent/pull/5615))

+- **Cron static method wrappers** to prevent self-binding ([#5299](https://github.com/NousResearch/hermes-agent/pull/5299))

+- **Stale 'hermes login' replaced** with 'hermes auth' + credential removal re-seeding fix ([#5670](https://github.com/NousResearch/hermes-agent/pull/5670))
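+
+The inactivity-based timeout at the top of this list can be pictured with a small sketch. This is an illustration under assumptions, not the gateway's code: the `InactivityTimer` class, its `touch` and `expired` methods, and the injectable `clock` are hypothetical names invented here. The idea is that every tool call resets a timestamp, and the agent is only stopped when the time since the last reset exceeds the idle limit.
+
+```python
+import time
+
+
+class InactivityTimer:
+    """Track idle time instead of total (wall-clock) run time."""
+
+    def __init__(self, idle_limit, clock=time.monotonic):
+        self.idle_limit = idle_limit   # seconds of silence allowed
+        self._clock = clock            # injectable for testing
+        self._last_activity = clock()
+
+    def touch(self):
+        # Record activity, e.g. each time a tool call starts or finishes.
+        self._last_activity = self._clock()
+
+    def expired(self):
+        # True only when nothing has happened for longer than idle_limit.
+        return self._clock() - self._last_activity > self.idle_limit
+```
+
+A watchdog would call `touch()` whenever a tool runs and check `expired()` periodically, so total run time alone never triggers the timeout.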

+

+### Telegram

+- **Group topics skill binding** for supergroup forum topics ([#4886](https://github.com/NousResearch/hermes-agent/pull/4886))

+- **Emoji reactions** for approval status and notifications ([#5975](https://github.com/NousResearch/hermes-agent/pull/5975))

+- **Duplicate message delivery prevented** on send timeout ([#5153](https://github.com/NousResearch/hermes-agent/pull/5153))

+- **Command names sanitized** to strip invalid characters ([#5596](https://github.com/NousResearch/hermes-agent/pull/5596))

+- **Per-platform disabled skills** respected in Telegram menu and gateway dispatch ([#4799](https://github.com/NousResearch/hermes-agent/pull/4799))

+- **/approve and /deny** routed through running-agent guard ([#4798](https://github.com/NousResearch/hermes-agent/pull/4798))

+

+### Discord

+- **Channel controls** — ignored_channels and no_thread_channels config options ([#5975](https://github.com/NousResearch/hermes-agent/pull/5975))

+- **Skills registered as native slash commands** via shared gateway logic ([#5603](https://github.com/NousResearch/hermes-agent/pull/5603))

+- **/approve, /deny, /queue, /background, /btw** registered as native slash commands ([#4800](https://github.com/NousResearch/hermes-agent/pull/4800), [#5477](https://github.com/NousResearch/hermes-agent/pull/5477))

+- **Unnecessary members intent** removed on startup + token lock leak fix ([#5302](https://github.com/NousResearch/hermes-agent/pull/5302))

+

+### Slack

+- **Thread engagement** — auto-respond in bot-started and mentioned threads ([#5897](https://github.com/NousResearch/hermes-agent/pull/5897))

+- **mrkdwn in edit_message** + thread replies without @mentions ([#5733](https://github.com/NousResearch/hermes-agent/pull/5733))

+

+### Matrix

+- **Tier 1 feature parity** — reactions, read receipts, rich formatting, room management ([#5275](https://github.com/NousResearch/hermes-agent/pull/5275))

+- **MATRIX_REQUIRE_MENTION and MATRIX_AUTO_THREAD** support ([#5106](https://github.com/NousResearch/hermes-agent/pull/5106))

+- **Comprehensive reliability** — encrypted media, auth recovery, cron E2EE, Synapse compat ([#5271](https://github.com/NousResearch/hermes-agent/pull/5271))

+- **CJK input, E2EE, and reconnect** fixes ([#5665](https://github.com/NousResearch/hermes-agent/pull/5665))

+

+### Signal

+- **Full MEDIA: tag delivery** — send_image_file, send_voice, and send_video implemented ([#5602](https://github.com/NousResearch/hermes-agent/pull/5602))

+

+### Mattermost

+- **File attachments** — set message type to DOCUMENT when post has file attachments — @nericervin ([#5609](https://github.com/NousResearch/hermes-agent/pull/5609))

+

+### Feishu

+- **Interactive card approval buttons** ([#6043](https://github.com/NousResearch/hermes-agent/pull/6043))

+- **Reconnect and ACL** fixes ([#5665](https://github.com/NousResearch/hermes-agent/pull/5665))

+

+### Webhooks

+- **`{__raw__}` template token** and thread_id passthrough for forum topics ([#5662](https://github.com/NousResearch/hermes-agent/pull/5662))

+

+---

+

+## 🖥️ CLI & User Experience

+

+### Interactive CLI

+- **Defer response content** until reasoning block completes ([#5773](https://github.com/NousResearch/hermes-agent/pull/5773))

+- **Ghost status-bar lines cleared** on terminal resize ([#4960](https://github.com/NousResearch/hermes-agent/pull/4960))

+- **Normalise `\r\n` and `\r` line endings** in pasted text ([#4849](https://github.com/NousResearch/hermes-agent/pull/4849))

+- **ChatConsole errors, curses scroll, skin-aware banner, and git state banner** fixes ([#5974](https://github.com/NousResearch/hermes-agent/pull/5974))

+- **Native Windows image paste** support ([#5917](https://github.com/NousResearch/hermes-agent/pull/5917))

+- **`--yolo` and other flags** no longer silently dropped when placed before the `chat` subcommand ([#5145](https://github.com/NousResearch/hermes-agent/pull/5145))

+

+### Setup & Configuration

+- **Config structure validation** — detect malformed YAML at startup with actionable error messages ([#5426](https://github.com/NousResearch/hermes-agent/pull/5426))

+- **Centralized logging** to `~/.hermes/logs/` — agent.log (INFO+), errors.log (WARNING+) with `hermes logs` command ([#5430](https://github.com/NousResearch/hermes-agent/pull/5430))

+- **Docs links added** to setup wizard sections ([#5283](https://github.com/NousResearch/hermes-agent/pull/5283))

+- **Doctor diagnostics** — sync provider checks, config migration, WAL and mem0 diagnostics ([#5077](https://github.com/NousResearch/hermes-agent/pull/5077))

+- **Timeout debug logging** and user-facing diagnostics improved ([#5370](https://github.com/NousResearch/hermes-agent/pull/5370))

+- **Reasoning effort unified** to config.yaml only ([#6118](https://github.com/NousResearch/hermes-agent/pull/6118))

+- **Permanent command allowlist** loaded on startup ([#5076](https://github.com/NousResearch/hermes-agent/pull/5076))

+- **`hermes auth remove`** now clears env-seeded credentials permanently ([#5285](https://github.com/NousResearch/hermes-agent/pull/5285))

+- **Bundled skills synced to all profiles** during update ([#5795](https://github.com/NousResearch/hermes-agent/pull/5795))

+- **`hermes update` no longer kills** freshly-restarted gateway service ([#5448](https://github.com/NousResearch/hermes-agent/pull/5448))

+- **`subprocess.run()` timeouts** added to all gateway CLI commands ([#5424](https://github.com/NousResearch/hermes-agent/pull/5424))

+- **Actionable error message** when Codex refresh token is reused — @tymrtn ([#5612](https://github.com/NousResearch/hermes-agent/pull/5612))

+- **Google-workspace skill scripts** can now run directly — @xinbenlv ([#5624](https://github.com/NousResearch/hermes-agent/pull/5624))

+

+### Cron System

+- **Inactivity-based cron timeout** — replaces wall-clock; active tasks run indefinitely ([#5440](https://github.com/NousResearch/hermes-agent/pull/5440))

+- **Pre-run script injection** for data collection and change detection ([#5082](https://github.com/NousResearch/hermes-agent/pull/5082))

+- **Delivery failure tracking** in job status ([#6042](https://github.com/NousResearch/hermes-agent/pull/6042))

+- **Delivery guidance** in cron prompts — stops send_message thrashing ([#5444](https://github.com/NousResearch/hermes-agent/pull/5444))

+- **MEDIA files delivered** as native platform attachments ([#5921](https://github.com/NousResearch/hermes-agent/pull/5921))

+- **[SILENT] suppression** works anywhere in response — @auspic7 ([#5654](https://github.com/NousResearch/hermes-agent/pull/5654))

+- **Cron path traversal** hardening ([#5147](https://github.com/NousResearch/hermes-agent/pull/5147))

+

+---

+

+## 🔧 Tool System

+

+### Terminal & Execution

+- **`execute_code` on remote backends** — code execution now works on Docker, SSH, Modal, and other remote terminal backends ([#5088](https://github.com/NousResearch/hermes-agent/pull/5088))

+- **Exit code context** for common CLI tools in terminal results — helps the agent understand what went wrong ([#5144](https://github.com/NousResearch/hermes-agent/pull/5144))

+- **Progressive subdirectory hint discovery** — agent learns project structure as it navigates ([#5291](https://github.com/NousResearch/hermes-agent/pull/5291))

+- **notify_on_complete for background processes** — get notified when long-running tasks finish ([#5779](https://github.com/NousResearch/hermes-agent/pull/5779))

+- **Docker env config** — explicit container environment variables via docker_env config ([#4738](https://github.com/NousResearch/hermes-agent/pull/4738))

+- **Approval metadata included** in terminal tool results ([#5141](https://github.com/NousResearch/hermes-agent/pull/5141))

+- **Workdir parameter sanitized** in terminal tool across all backends ([#5629](https://github.com/NousResearch/hermes-agent/pull/5629))

+- **Detached process crash recovery** state corrected ([#6101](https://github.com/NousResearch/hermes-agent/pull/6101))

+- **Agent-browser paths with spaces** preserved — @Vasanthdev2004 ([#6077](https://github.com/NousResearch/hermes-agent/pull/6077))

+- **Portable base64 encoding** for image reading on macOS — @CharlieKerfoot ([#5657](https://github.com/NousResearch/hermes-agent/pull/5657))

+

+### Browser

+- **Switch managed browser provider** from Browserbase to Browser Use — @benbarclay ([#5750](https://github.com/NousResearch/hermes-agent/pull/5750))

+- **Firecrawl cloud browser** provider — @alt-glitch ([#5628](https://github.com/NousResearch/hermes-agent/pull/5628))

+- **JS evaluation** via browser_console expression parameter ([#5303](https://github.com/NousResearch/hermes-agent/pull/5303))

+- **Windows browser** fixes ([#5665](https://github.com/NousResearch/hermes-agent/pull/5665))

+

+### MCP

+- **MCP OAuth 2.1 PKCE** — full standards-compliant OAuth client support ([#5420](https://github.com/NousResearch/hermes-agent/pull/5420))

+- **OSV malware check** for MCP extension packages ([#5305](https://github.com/NousResearch/hermes-agent/pull/5305))

+- **Prefer structuredContent over text** + no_mcp sentinel ([#5979](https://github.com/NousResearch/hermes-agent/pull/5979))

+- **Unknown toolsets warning suppressed** for MCP server names ([#5279](https://github.com/NousResearch/hermes-agent/pull/5279))

+

+### Web & Files

+- **.zip document support** + auto-mount cache dirs into remote backends ([#4846](https://github.com/NousResearch/hermes-agent/pull/4846))

+- **Redact query secrets** in send_message errors — @WAXLYY ([#5650](https://github.com/NousResearch/hermes-agent/pull/5650))

+

+### Delegation

+- **Credential pool sharing** + workspace path hints for subagents ([#5748](https://github.com/NousResearch/hermes-agent/pull/5748))

+

+### ACP (VS Code / Zed / JetBrains)

+- **Aggregate ACP improvements** — auth compat, protocol fixes, command ads, delegation, SSE events ([#5292](https://github.com/NousResearch/hermes-agent/pull/5292))

+

+---

+

+## 🧩 Skills Ecosystem

+

+### Skills System

+- **Skill config interface** — skills can declare required config.yaml settings, which are prompted for during setup and injected at load time ([#5635](https://github.com/NousResearch/hermes-agent/pull/5635))

+- **Plugin CLI registration system** — plugins register their own CLI subcommands without touching main.py ([#5295](https://github.com/NousResearch/hermes-agent/pull/5295))

+- **Request-scoped API hooks** with tool call correlation IDs for plugins ([#5427](https://github.com/NousResearch/hermes-agent/pull/5427))

+- **Session lifecycle hooks** — on_session_finalize and on_session_reset for CLI + gateway ([#6129](https://github.com/NousResearch/hermes-agent/pull/6129))

+- **Prompt for required env vars** during plugin install — @kshitijk4poor ([#5470](https://github.com/NousResearch/hermes-agent/pull/5470))

+- **Plugin name validation** — reject names that resolve to plugins root ([#5368](https://github.com/NousResearch/hermes-agent/pull/5368))

+- **pre_llm_call plugin context** moved to user message to preserve prompt cache ([#5146](https://github.com/NousResearch/hermes-agent/pull/5146))

+

+### New & Updated Skills

+- **popular-web-designs** — 54 production website design systems ([#5194](https://github.com/NousResearch/hermes-agent/pull/5194))

+- **p5js creative coding** — @SHL0MS ([#5600](https://github.com/NousResearch/hermes-agent/pull/5600))

+- **manim-video** — mathematical and technical animations — @SHL0MS ([#4930](https://github.com/NousResearch/hermes-agent/pull/4930))

+- **llm-wiki** — Karpathy's LLM Wiki skill ([#5635](https://github.com/NousResearch/hermes-agent/pull/5635))

+- **gitnexus-explorer** — codebase indexing and knowledge serving ([#5208](https://github.com/NousResearch/hermes-agent/pull/5208))

+- **research-paper-writing** — AI-Scientist & GPT-Researcher patterns — @SHL0MS ([#5421](https://github.com/NousResearch/hermes-agent/pull/5421))

+- **blogwatcher** updated to JulienTant's fork ([#5759](https://github.com/NousResearch/hermes-agent/pull/5759))

+- **claude-code skill** comprehensive rewrite v2.0 + v2.2 ([#5155](https://github.com/NousResearch/hermes-agent/pull/5155), [#5158](https://github.com/NousResearch/hermes-agent/pull/5158))

+- **Code verification skills** consolidated into one ([#4854](https://github.com/NousResearch/hermes-agent/pull/4854))

+- **Manim CE reference docs** expanded — geometry, animations, LaTeX — @leotrs ([#5791](https://github.com/NousResearch/hermes-agent/pull/5791))

+- **Manim-video references** — design thinking, updaters, paper explainer, decorations, production quality — @SHL0MS ([#5588](https://github.com/NousResearch/hermes-agent/pull/5588), [#5408](https://github.com/NousResearch/hermes-agent/pull/5408))

+

+---

+

+## 🔒 Security & Reliability

+

+### Security Hardening

+- **Consolidated security** — SSRF protections, timing attack mitigations, tar traversal prevention, credential leakage guards ([#5944](https://github.com/NousResearch/hermes-agent/pull/5944))

+- **Cross-session isolation** + cron path traversal hardening ([#5613](https://github.com/NousResearch/hermes-agent/pull/5613))

+- **Workdir parameter sanitized** in terminal tool across all backends ([#5629](https://github.com/NousResearch/hermes-agent/pull/5629))

+- **Approval 'once' session escalation** prevented + cron delivery platform validation ([#5280](https://github.com/NousResearch/hermes-agent/pull/5280))

+- **Profile-scoped Google Workspace OAuth tokens** protected ([#4910](https://github.com/NousResearch/hermes-agent/pull/4910))

+

+### Reliability

+- **Aggressive worktree and branch cleanup** to prevent accumulation ([#6134](https://github.com/NousResearch/hermes-agent/pull/6134))

+- **O(n²) catastrophic backtracking** in redact regex fixed — 100x improvement on large outputs ([#4962](https://github.com/NousResearch/hermes-agent/pull/4962))

+- **Runtime stability fixes** across core, web, delegate, and browser tools ([#4843](https://github.com/NousResearch/hermes-agent/pull/4843))

+- **API server streaming fix** + conversation history support ([#5977](https://github.com/NousResearch/hermes-agent/pull/5977))

+- **OpenViking API endpoint paths** and response parsing corrected ([#5078](https://github.com/NousResearch/hermes-agent/pull/5078))

+

+---

+

+## 🐛 Notable Bug Fixes

+

+- **9 community bugfixes salvaged** — gateway, cron, deps, macOS launchd in one batch ([#5288](https://github.com/NousResearch/hermes-agent/pull/5288))

+- **Batch core bug fixes** — model config, session reset, alias fallback, launchctl, delegation, atomic writes ([#5630](https://github.com/NousResearch/hermes-agent/pull/5630))

+- **Batch gateway/platform fixes** — matrix E2EE, CJK input, Windows browser, Feishu reconnect + ACL ([#5665](https://github.com/NousResearch/hermes-agent/pull/5665))

+- **Stale test skips removed**, plus fixes for regex backtracking, a file search bug, and test flakiness ([#4969](https://github.com/NousResearch/hermes-agent/pull/4969))

+- **Nix flake** — read version, regen uv.lock, add hermes_logging — @alt-glitch ([#5651](https://github.com/NousResearch/hermes-agent/pull/5651))

+- **Lowercase variable redaction** regression tests ([#5185](https://github.com/NousResearch/hermes-agent/pull/5185))

+

+---

+

+## 🧪 Testing

+

+- **57 failing CI tests repaired** across 14 files ([#5823](https://github.com/NousResearch/hermes-agent/pull/5823))

+- **Test suite re-architecture** + CI failure fixes — @alt-glitch ([#5946](https://github.com/NousResearch/hermes-agent/pull/5946))

+- **Codebase-wide lint cleanup** — unused imports, dead code, and inefficient patterns ([#5821](https://github.com/NousResearch/hermes-agent/pull/5821))

+- **browser_close tool removed** — auto-cleanup handles it ([#5792](https://github.com/NousResearch/hermes-agent/pull/5792))

+

+---

+

+## 📚 Documentation

+

+- **Comprehensive documentation audit** — fix stale info, expand thin pages, add depth ([#5393](https://github.com/NousResearch/hermes-agent/pull/5393))

+- **40+ discrepancies fixed** between documentation and codebase ([#5818](https://github.com/NousResearch/hermes-agent/pull/5818))

+- **13 features documented** from last week's PRs ([#5815](https://github.com/NousResearch/hermes-agent/pull/5815))

+- **Guides section overhaul** — fix existing + add 3 new tutorials ([#5735](https://github.com/NousResearch/hermes-agent/pull/5735))

+- **Salvaged 4 docs PRs** — docker setup, post-update validation, local LLM guide, signal-cli install ([#5727](https://github.com/NousResearch/hermes-agent/pull/5727))

+- **Discord configuration reference** ([#5386](https://github.com/NousResearch/hermes-agent/pull/5386))

+- **Community FAQ entries** for common workflows and troubleshooting ([#4797](https://github.com/NousResearch/hermes-agent/pull/4797))

+- **WSL2 networking guide** for local model servers ([#5616](https://github.com/NousResearch/hermes-agent/pull/5616))

+- **Honcho CLI reference** + plugin CLI registration docs ([#5308](https://github.com/NousResearch/hermes-agent/pull/5308))

+- **Obsidian Headless setup** for servers in llm-wiki ([#5660](https://github.com/NousResearch/hermes-agent/pull/5660))

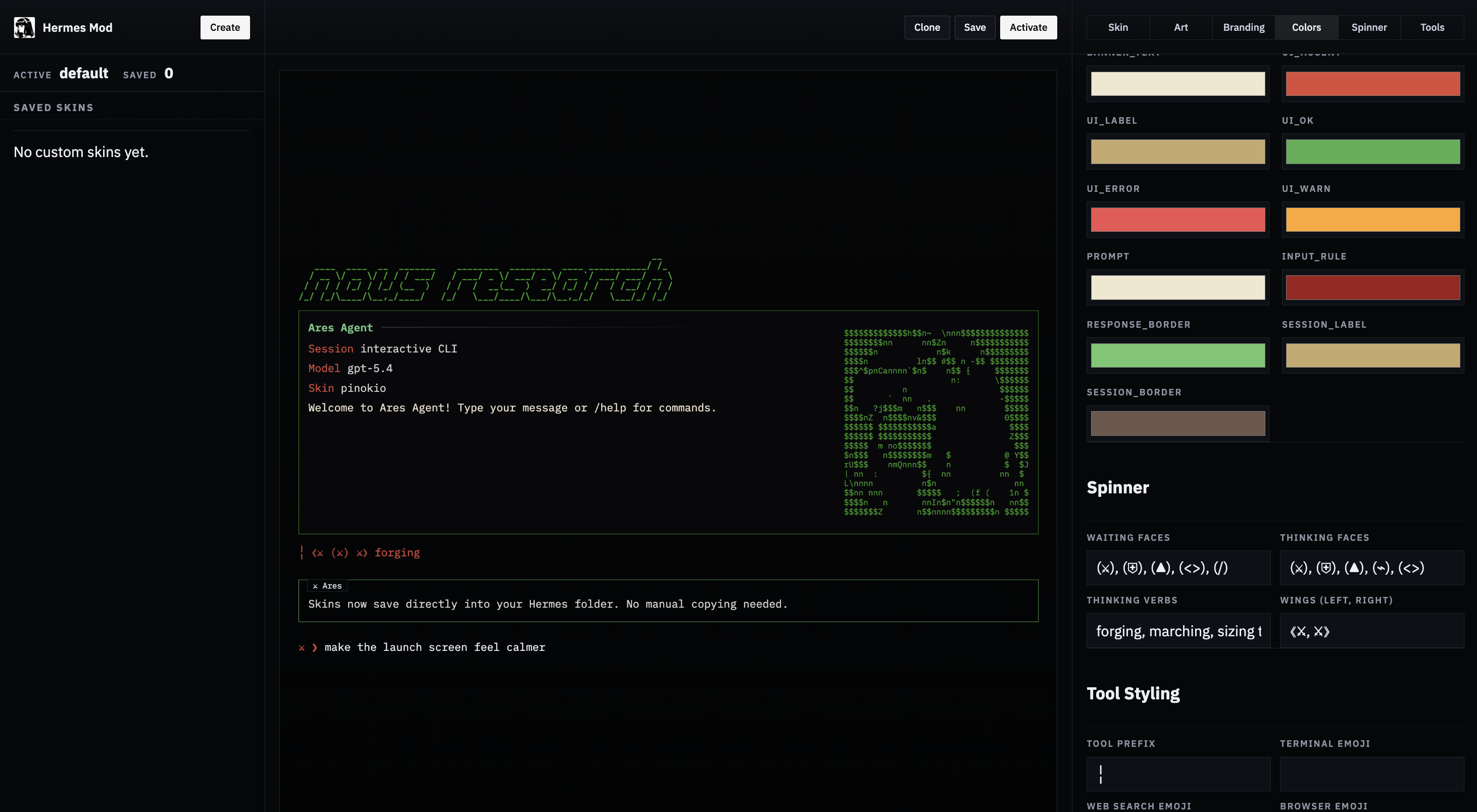

+- **Hermes Mod visual skin editor** added to skins page ([#6095](https://github.com/NousResearch/hermes-agent/pull/6095))

+

+---

+

+## 👥 Contributors

+

+### Core

+- **@teknium1** — 179 PRs

+

+### Top Community Contributors

+- **@SHL0MS** (7 PRs) — p5js creative coding skill, manim-video skill + 5 reference expansions, research-paper-writing, Nous OAuth fix, manim font fix

+- **@alt-glitch** (3 PRs) — Firecrawl cloud browser provider, test re-architecture + CI fixes, Nix flake fixes

+- **@benbarclay** (2 PRs) — Browser Use managed provider switch, Nous portal base URL fix

+- **@CharlieKerfoot** (2 PRs) — macOS portable base64 encoding, thread-safe PairingStore

+- **@WAXLYY** (2 PRs) — send_message secret redaction, gateway media URL sanitization

+- **@MadKangYu** (2 PRs) — Telegram log noise reduction, context compaction fix for temperature-restricted models

+

+### All Contributors

+@alt-glitch, @austinpickett, @auspic7, @benbarclay, @CharlieKerfoot, @GratefulDave, @kshitijk4poor, @leotrs, @lumethegreat, @MadKangYu, @nericervin, @ryanautomated, @SHL0MS, @techguysimon, @tymrtn, @Vasanthdev2004, @WAXLYY, @xinbenlv

+

+---

+

+**Full Changelog**: [v2026.4.3...v2026.4.8](https://github.com/NousResearch/hermes-agent/compare/v2026.4.3...v2026.4.8)

diff --git a/agent/anthropic_adapter.py b/agent/anthropic_adapter.py

index f4e8dcee65..2d6c2dd82e 100644

--- a/agent/anthropic_adapter.py

+++ b/agent/anthropic_adapter.py

@@ -1102,7 +1102,15 @@ def convert_messages_to_anthropic(

curr_content = [{"type": "text", "text": curr_content}]

fixed[-1]["content"] = prev_content + curr_content

else:

- # Consecutive assistant messages — merge text content

+ # Consecutive assistant messages — merge text content.

+ # Drop thinking blocks from the *second* message: their

+ # signature was computed against a different turn boundary

+ # and becomes invalid once merged.

+ if isinstance(m["content"], list):

+ m["content"] = [

+ b for b in m["content"]

+ if not (isinstance(b, dict) and b.get("type") in ("thinking", "redacted_thinking"))

+ ]

prev_blocks = fixed[-1]["content"]

curr_blocks = m["content"]

if isinstance(prev_blocks, list) and isinstance(curr_blocks, list):

@@ -1120,6 +1128,68 @@ def convert_messages_to_anthropic(

fixed.append(m)

result = fixed

+ # ── Thinking block signature management ──────────────────────────

+ # Anthropic signs thinking blocks against the full turn content.

+ # Any upstream mutation (context compression, session truncation,

+ # orphan stripping, message merging) invalidates the signature,

+ # causing HTTP 400 "Invalid signature in thinking block".

+ #

+ # Strategy (following clawdbot/OpenClaw pattern):

+ # 1. Strip thinking/redacted_thinking from all assistant messages

+ # EXCEPT the last one — preserves reasoning continuity on the

+ # current tool-use chain while avoiding stale signature errors.

+ # 2. Downgrade unsigned thinking blocks (no signature) to text —

+ # Anthropic can't validate them and will reject them.

+ # 3. Strip cache_control from thinking/redacted_thinking blocks —

+ # cache markers can interfere with signature validation.

+ _THINKING_TYPES = frozenset(("thinking", "redacted_thinking"))

+

+ last_assistant_idx = None

+ for i in range(len(result) - 1, -1, -1):

+ if result[i].get("role") == "assistant":

+ last_assistant_idx = i

+ break

+

+ for idx, m in enumerate(result):

+ if m.get("role") != "assistant" or not isinstance(m.get("content"), list):

+ continue

+

+ if idx != last_assistant_idx:

+ # Strip ALL thinking blocks from non-latest assistant messages

+ stripped = [

+ b for b in m["content"]

+ if not (isinstance(b, dict) and b.get("type") in _THINKING_TYPES)

+ ]

+ m["content"] = stripped or [{"type": "text", "text": "(thinking elided)"}]

+ else:

+ # Latest assistant: keep signed thinking blocks for reasoning

+ # continuity; downgrade unsigned ones to plain text.

+ new_content = []

+ for b in m["content"]:

+ if not isinstance(b, dict) or b.get("type") not in _THINKING_TYPES:

+ new_content.append(b)

+ continue

+ if b.get("type") == "redacted_thinking":

+ # Redacted blocks use 'data' for the signature payload

+ if b.get("data"):

+ new_content.append(b)

+ # else: drop — no data means it can't be validated

+ elif b.get("signature"):

+ # Signed thinking block — keep it

+ new_content.append(b)

+ else:

+ # Unsigned thinking — downgrade to text so it's not lost

+ thinking_text = b.get("thinking", "")

+ if thinking_text:

+ new_content.append({"type": "text", "text": thinking_text})

+ m["content"] = new_content or [{"type": "text", "text": "(empty)"}]

+

+ # Strip cache_control from any remaining thinking/redacted_thinking

+ # blocks — cache markers interfere with signature validation.

+ for b in m["content"]:

+ if isinstance(b, dict) and b.get("type") in _THINKING_TYPES:

+ b.pop("cache_control", None)

+

return system, result

@@ -1224,9 +1294,9 @@ def build_anthropic_kwargs(

# Map reasoning_config to Anthropic's thinking parameter.

# Claude 4.6 models use adaptive thinking + output_config.effort.

# Older models use manual thinking with budget_tokens.

- # Haiku models do NOT support extended thinking at all — skip entirely.

+ # Haiku and MiniMax models do NOT support extended thinking — skip entirely.

if reasoning_config and isinstance(reasoning_config, dict):

- if reasoning_config.get("enabled") is not False and "haiku" not in model.lower():

+ if reasoning_config.get("enabled") is not False and "haiku" not in model.lower() and "minimax" not in model.lower():

effort = str(reasoning_config.get("effort", "medium")).lower()

budget = THINKING_BUDGET.get(effort, 8000)

if _supports_adaptive_thinking(model):
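
Reviewer note: the thinking-block strategy in the hunk above (strip from every assistant message except the last, keep signed blocks on the last one, downgrade unsigned ones to text, drop `cache_control`) can be sketched as a standalone function. This is a minimal illustration that assumes the message and block shapes shown in the diff. The name `strip_stale_thinking` is hypothetical, and unlike the patched code it returns new message dicts instead of mutating in place.

```python
from typing import Any, Dict, List

THINKING_TYPES = frozenset(("thinking", "redacted_thinking"))


def strip_stale_thinking(messages: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Keep thinking blocks only on the last assistant message (hypothetical helper)."""
    last_assistant = max(
        (i for i, m in enumerate(messages) if m.get("role") == "assistant"),
        default=None,
    )
    cleaned: List[Dict[str, Any]] = []
    for idx, msg in enumerate(messages):
        content = msg.get("content")
        if msg.get("role") != "assistant" or not isinstance(content, list):
            cleaned.append(msg)  # user/tool messages and plain strings pass through
            continue

        is_last = idx == last_assistant
        kept: List[Any] = []
        for block in content:
            if not (isinstance(block, dict) and block.get("type") in THINKING_TYPES):
                kept.append(block)  # ordinary content is always preserved
            elif not is_last:
                continue  # older turns: drop every thinking block
            elif block.get("type") == "redacted_thinking":
                if block.get("data"):  # keep only if the payload is present
                    kept.append({k: v for k, v in block.items() if k != "cache_control"})
            elif block.get("signature"):
                # Signed thinking block: keep it, minus any cache marker.
                kept.append({k: v for k, v in block.items() if k != "cache_control"})
            elif block.get("thinking"):
                # Unsigned thinking: downgrade to text so the reasoning is not lost.
                kept.append({"type": "text", "text": block["thinking"]})

        placeholder = "(empty)" if is_last else "(thinking elided)"
        cleaned.append({**msg, "content": kept or [{"type": "text", "text": placeholder}]})
    return cleaned
```

Returning copies keeps the caller's history intact, which may matter if the same message list is reused for another provider.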

diff --git a/agent/auxiliary_client.py b/agent/auxiliary_client.py

index 49a78458d3..2b99ac0708 100644

--- a/agent/auxiliary_client.py

+++ b/agent/auxiliary_client.py

@@ -59,13 +59,48 @@ from hermes_constants import OPENROUTER_BASE_URL

logger = logging.getLogger(__name__)

+_PROVIDER_ALIASES = {

+ "google": "gemini",

+ "google-gemini": "gemini",

+ "google-ai-studio": "gemini",

+ "glm": "zai",

+ "z-ai": "zai",

+ "z.ai": "zai",

+ "zhipu": "zai",

+ "kimi": "kimi-coding",

+ "moonshot": "kimi-coding",

+ "minimax-china": "minimax-cn",

+ "minimax_cn": "minimax-cn",

+ "claude": "anthropic",

+ "claude-code": "anthropic",

+}

+

+

+def _normalize_aux_provider(provider: Optional[str], *, for_vision: bool = False) -> str:

+ normalized = (provider or "auto").strip().lower()

+ if normalized.startswith("custom:"):

+ suffix = normalized.split(":", 1)[1].strip()

+ if not suffix:

+ return "custom"

+ normalized = suffix if not for_vision else "custom"

+ if normalized == "codex":

+ return "openai-codex"

+ if normalized == "main":

+ # Resolve to the user's actual main provider so named custom providers

+ # and non-aggregator providers (DeepSeek, Alibaba, etc.) work correctly.

+ main_prov = _read_main_provider()

+ if main_prov and main_prov not in ("auto", "main", ""):

+ return main_prov

+ return "custom"

+ return _PROVIDER_ALIASES.get(normalized, normalized)

+

# Default auxiliary models for direct API-key providers (cheap/fast for side tasks)

_API_KEY_PROVIDER_AUX_MODELS: Dict[str, str] = {

"gemini": "gemini-3-flash-preview",

"zai": "glm-4.5-flash",

"kimi-coding": "kimi-k2-turbo-preview",

- "minimax": "MiniMax-M2.7-highspeed",

- "minimax-cn": "MiniMax-M2.7-highspeed",

+ "minimax": "MiniMax-M2.7",

+ "minimax-cn": "MiniMax-M2.7",

"anthropic": "claude-haiku-4-5-20251001",

"ai-gateway": "google/gemini-3-flash",

"opencode-zen": "gemini-3-flash",

@@ -92,6 +127,7 @@ auxiliary_is_nous: bool = False

_OPENROUTER_MODEL = "google/gemini-3-flash-preview"

_NOUS_MODEL = "google/gemini-3-flash-preview"

_NOUS_FREE_TIER_VISION_MODEL = "xiaomi/mimo-v2-omni"

+_NOUS_FREE_TIER_AUX_MODEL = "xiaomi/mimo-v2-pro"

_NOUS_DEFAULT_BASE_URL = "https://inference-api.nousresearch.com/v1"

_ANTHROPIC_DEFAULT_BASE_URL = "https://api.anthropic.com"

_AUTH_JSON_PATH = get_hermes_home() / "auth.json"

@@ -105,6 +141,23 @@ _CODEX_AUX_MODEL = "gpt-5.2-codex"

_CODEX_AUX_BASE_URL = "https://chatgpt.com/backend-api/codex"

+def _to_openai_base_url(base_url: str) -> str:

+ """Normalize an Anthropic-style base URL to OpenAI-compatible format.

+

+ Some providers (MiniMax, MiniMax-CN) expose an ``/anthropic`` endpoint for

+ the Anthropic Messages API and a separate ``/v1`` endpoint for OpenAI chat

+ completions. The auxiliary client uses the OpenAI SDK, so it must hit the

+ ``/v1`` surface. Passing the raw ``inference_base_url`` causes requests to

+ land on ``/anthropic/chat/completions`` — a 404.

+ """

+ url = str(base_url or "").strip().rstrip("/")

+ if url.endswith("/anthropic"):

+ rewritten = url[: -len("/anthropic")] + "/v1"

+ logger.debug("Auxiliary client: rewrote base URL %s → %s", url, rewritten)

+ return rewritten

+ return url

+

+

def _select_pool_entry(provider: str) -> Tuple[bool, Optional[Any]]:

"""Return (pool_exists_for_provider, selected_entry)."""

try:

@@ -634,7 +687,9 @@ def _resolve_api_key_provider() -> Tuple[Optional[OpenAI], Optional[str]]:

if not api_key:

continue

- base_url = _pool_runtime_base_url(entry, pconfig.inference_base_url) or pconfig.inference_base_url

+ base_url = _to_openai_base_url(

+ _pool_runtime_base_url(entry, pconfig.inference_base_url) or pconfig.inference_base_url

+ )

model = _API_KEY_PROVIDER_AUX_MODELS.get(provider_id, "default")

logger.debug("Auxiliary text client: %s (%s) via pool", pconfig.name, model)

extra = {}

@@ -651,7 +706,9 @@ def _resolve_api_key_provider() -> Tuple[Optional[OpenAI], Optional[str]]:

if not api_key:

continue

- base_url = str(creds.get("base_url", "")).strip().rstrip("/") or pconfig.inference_base_url

+ base_url = _to_openai_base_url(

+ str(creds.get("base_url", "")).strip().rstrip("/") or pconfig.inference_base_url

+ )

model = _API_KEY_PROVIDER_AUX_MODELS.get(provider_id, "default")

logger.debug("Auxiliary text client: %s (%s)", pconfig.name, model)

extra = {}

@@ -713,7 +770,7 @@ def _try_openrouter() -> Tuple[Optional[OpenAI], Optional[str]]:

default_headers=_OR_HEADERS), _OPENROUTER_MODEL

-def _try_nous() -> Tuple[Optional[OpenAI], Optional[str]]:

+def _try_nous(vision: bool = False) -> Tuple[Optional[OpenAI], Optional[str]]:

nous = _read_nous_auth()

if not nous:

return None, None

@@ -725,12 +782,13 @@ def _try_nous() -> Tuple[Optional[OpenAI], Optional[str]]:

else:

model = _NOUS_MODEL

# Free-tier users can't use paid auxiliary models — use the free

- # multimodal model instead so vision/browser-vision still works.

+ # models instead: mimo-v2-omni for vision, mimo-v2-pro for text tasks.

try:

from hermes_cli.models import check_nous_free_tier

if check_nous_free_tier():

- model = _NOUS_FREE_TIER_VISION_MODEL

- logger.debug("Free-tier Nous account — using %s for auxiliary/vision", model)

+ model = _NOUS_FREE_TIER_VISION_MODEL if vision else _NOUS_FREE_TIER_AUX_MODEL

+ logger.debug("Free-tier Nous account — using %s for auxiliary/%s",

+ model, "vision" if vision else "text")

except Exception:

pass

return (

@@ -776,7 +834,7 @@ def _read_main_provider() -> str:

if isinstance(model_cfg, dict):

provider = model_cfg.get("provider", "")

if isinstance(provider, str) and provider.strip():

- return provider.strip().lower()

+ return _normalize_aux_provider(provider)

except Exception:

pass

return ""

@@ -1138,17 +1196,7 @@ def resolve_provider_client(

(client, resolved_model) or (None, None) if auth is unavailable.

"""

# Normalise aliases

- provider = (provider or "auto").strip().lower()

- if provider == "codex":

- provider = "openai-codex"

- if provider == "main":

- # Resolve to the user's actual main provider so named custom providers

- # and non-aggregator providers (DeepSeek, Alibaba, etc.) work correctly.

- main_prov = _read_main_provider()

- if main_prov and main_prov not in ("auto", "main", ""):

- provider = main_prov

- else:

- provider = "custom"

+ provider = _normalize_aux_provider(provider)

# ── Auto: try all providers in priority order ────────────────────

if provider == "auto":

@@ -1298,7 +1346,9 @@ def resolve_provider_client(

provider, ", ".join(tried_sources))

return None, None

- base_url = str(creds.get("base_url", "")).strip().rstrip("/") or pconfig.inference_base_url

+ base_url = _to_openai_base_url(

+ str(creds.get("base_url", "")).strip().rstrip("/") or pconfig.inference_base_url

+ )

default_model = _API_KEY_PROVIDER_AUX_MODELS.get(provider, "")

final_model = model or default_model

@@ -1375,24 +1425,11 @@ def get_async_text_auxiliary_client(task: str = ""):

_VISION_AUTO_PROVIDER_ORDER = (

"openrouter",

"nous",

- "openai-codex",

- "anthropic",

- "custom",

)

def _normalize_vision_provider(provider: Optional[str]) -> str:

- provider = (provider or "auto").strip().lower()

- if provider == "codex":

- return "openai-codex"

- if provider == "main":

- # Resolve to actual main provider — named custom providers and

- # non-aggregator providers need to pass through as their real name.

- main_prov = _read_main_provider()

- if main_prov and main_prov not in ("auto", "main", ""):

- return main_prov

- return "custom"

- return provider

+ return _normalize_aux_provider(provider, for_vision=True)

def _resolve_strict_vision_backend(provider: str) -> Tuple[Optional[Any], Optional[str]]:

@@ -1400,7 +1437,7 @@ def _resolve_strict_vision_backend(provider: str) -> Tuple[Optional[Any], Option

if provider == "openrouter":

return _try_openrouter()

if provider == "nous":

- return _try_nous()

+ return _try_nous(vision=True)

if provider == "openai-codex":

return _try_codex()

if provider == "anthropic":

@@ -1433,17 +1470,20 @@ def _preferred_main_vision_provider() -> Optional[str]:

def get_available_vision_backends() -> List[str]:

"""Return the currently available vision backends in auto-selection order.

- This is the single source of truth for setup, tool gating, and runtime

- auto-routing of vision tasks. The selected main provider is preferred when

- it is also a known-good vision backend; otherwise Hermes falls back through

- the standard conservative order.

+ Order: OpenRouter → Nous → active provider. This is the single source

+ of truth for setup, tool gating, and runtime auto-routing of vision tasks.

"""

- ordered = list(_VISION_AUTO_PROVIDER_ORDER)

- preferred = _preferred_main_vision_provider()

- if preferred in ordered:

- ordered.remove(preferred)

- ordered.insert(0, preferred)

- return [provider for provider in ordered if _strict_vision_backend_available(provider)]

+ available = [p for p in _VISION_AUTO_PROVIDER_ORDER

+ if _strict_vision_backend_available(p)]

+ # Also check the user's active provider (may be DeepSeek, Alibaba, named

+ # custom, etc.) — resolve_provider_client handles all provider types.

+ main_provider = _read_main_provider()

+ if (main_provider and main_provider not in ("auto", "")

+ and main_provider not in available):

+ client, _ = resolve_provider_client(main_provider, _read_main_model())

+ if client is not None:

+ available.append(main_provider)

+ return available

def resolve_vision_provider_client(

@@ -1488,16 +1528,30 @@ def resolve_vision_provider_client(

return "custom", client, final_model

if requested == "auto":

- ordered = list(_VISION_AUTO_PROVIDER_ORDER)

- preferred = _preferred_main_vision_provider()

- if preferred in ordered:

- ordered.remove(preferred)

- ordered.insert(0, preferred)

-

- for candidate in ordered:

+ # Vision auto-detection order:

+ # 1. OpenRouter (known vision-capable default model)

+ # 2. Nous Portal (known vision-capable default model)

+ # 3. Active provider + model (user's main chat config)

+ # 4. Stop

+ for candidate in _VISION_AUTO_PROVIDER_ORDER:

sync_client, default_model = _resolve_strict_vision_backend(candidate)

if sync_client is not None:

return _finalize(candidate, sync_client, default_model)

+

+ # Fall back to the user's active provider + model.

+ main_provider = _read_main_provider()

+ main_model = _read_main_model()

+ if main_provider and main_provider not in ("auto", ""):

+ sync_client, resolved_model = resolve_provider_client(

+ main_provider, main_model)

+ if sync_client is not None:

+ logger.info(

+ "Vision auto-detect: using active provider %s (%s)",

+ main_provider, resolved_model or main_model,

+ )

+ return _finalize(

+ main_provider, sync_client, resolved_model or main_model)

+

logger.debug("Auxiliary vision client: none available")

return None, None, None
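
Reviewer note: two small normalizations introduced in this file, the `/anthropic` to `/v1` base URL rewrite and the provider alias lookup, are easy to check in isolation. The sketch below is a simplified illustration, not the module's code: the function names and the `api.example.com` host are hypothetical, the alias table is a subset of `_PROVIDER_ALIASES`, and the `codex` and `main` special cases are left out.

```python
from typing import Optional

# Subset of the alias table from the diff, for illustration only.
ALIASES = {"google": "gemini", "glm": "zai", "claude": "anthropic"}


def to_openai_base_url(base_url: Optional[str]) -> str:
    """Rewrite a trailing /anthropic path segment to /v1; leave other URLs alone."""
    url = str(base_url or "").strip().rstrip("/")
    suffix = "/anthropic"
    if url.endswith(suffix):
        return url[: -len(suffix)] + "/v1"
    return url


def normalize_provider(provider: Optional[str], *, for_vision: bool = False) -> str:
    """Lowercase, resolve 'custom:<name>' prefixes, then apply aliases."""
    normalized = (provider or "auto").strip().lower()
    if normalized.startswith("custom:"):
        name = normalized.split(":", 1)[1].strip()
        if not name:
            return "custom"
        # Vision routing collapses named custom providers to plain "custom".
        normalized = "custom" if for_vision else name
    return ALIASES.get(normalized, normalized)


print(to_openai_base_url("https://api.example.com/anthropic/"))
print(normalize_provider("custom:my-server", for_vision=True))
```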

diff --git a/agent/model_metadata.py b/agent/model_metadata.py

index 50245a7c9c..0a22711865 100644

--- a/agent/model_metadata.py

+++ b/agent/model_metadata.py

@@ -113,8 +113,15 @@ DEFAULT_CONTEXT_LENGTHS = {

"llama": 131072,

# Qwen

"qwen": 131072,

- # MiniMax

- "minimax": 204800,

+ # MiniMax (lowercase — lookup lowercases model names at line 973)

+ "minimax-m1-256k": 1000000,

+ "minimax-m1-128k": 1000000,

+ "minimax-m1-80k": 1000000,

+ "minimax-m1-40k": 1000000,

+ "minimax-m1": 1000000,

+ "minimax-m2.5": 1048576,

+ "minimax-m2.7": 1048576,

+ "minimax": 1048576,

# GLM

"glm": 202752,

# Kimi

@@ -127,7 +134,7 @@ DEFAULT_CONTEXT_LENGTHS = {

"deepseek-ai/DeepSeek-V3.2": 65536,

"moonshotai/Kimi-K2.5": 262144,

"moonshotai/Kimi-K2-Thinking": 262144,

- "MiniMaxAI/MiniMax-M2.5": 204800,

+ "minimaxai/minimax-m2.5": 1048576,

"XiaomiMiMo/MiMo-V2-Flash": 32768,

"mimo-v2-pro": 1048576,

"mimo-v2-omni": 1048576,

@@ -611,6 +618,59 @@ def _model_id_matches(candidate_id: str, lookup_model: str) -> bool:

return False

+def query_ollama_num_ctx(model: str, base_url: str) -> Optional[int]:

+ """Query an Ollama server for the model's context length.

+

+ Returns the model's maximum context from GGUF metadata via ``/api/show``,

+ or the explicit ``num_ctx`` from the Modelfile if set. Returns None if

+ the server is unreachable or not Ollama.

+

+ This is the value that should be passed as ``num_ctx`` in Ollama chat

+ requests to override the default 2048.

+ """

+ import httpx

+

+ bare_model = _strip_provider_prefix(model)

+ server_url = base_url.rstrip("/")

+ if server_url.endswith("/v1"):

+ server_url = server_url[:-3]

+

+ try:

+ server_type = detect_local_server_type(base_url)

+ except Exception:

+ return None

+ if server_type != "ollama":

+ return None

+

+ try:

+ with httpx.Client(timeout=3.0) as client:

+ resp = client.post(f"{server_url}/api/show", json={"name": bare_model})

+ if resp.status_code != 200:

+ return None

+ data = resp.json()

+

+ # Prefer explicit num_ctx from Modelfile parameters (user override)

+ params = data.get("parameters", "")

+ if "num_ctx" in params:

+ for line in params.split("\n"):

+ if "num_ctx" in line:

+ parts = line.strip().split()

+ if len(parts) >= 2:

+ try:

+ return int(parts[-1])

+ except ValueError:

+ pass

+

+ # Fall back to GGUF model_info context_length (training max)

+ model_info = data.get("model_info", {})

+ for key, value in model_info.items():

+ if "context_length" in key and isinstance(value, (int, float)):

+ return int(value)

+ except Exception:

+ pass

+ return None

+

+

def _query_local_context_length(model: str, base_url: str) -> Optional[int]:

"""Query a local server for the model's context length."""

import httpx
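
Reviewer note: the precedence used by `query_ollama_num_ctx` (an explicit `num_ctx` from the Modelfile parameters first, then any `*context_length` key in `model_info`) can be exercised without a running server. The sketch below assumes the response shape implied by the diff: `parameters` is a newline-separated string and `model_info` is a dict. The helper name and the sample payload are hypothetical, and the parser is slightly stricter than the patched code because it requires `num_ctx` to be the first token on its line.

```python
from typing import Any, Dict, Optional


def context_length_from_show(data: Dict[str, Any]) -> Optional[int]:
    """Extract a context length from an /api/show-style payload (hypothetical helper)."""
    # 1. Prefer an explicit num_ctx override from the Modelfile parameters.
    params = data.get("parameters") or ""
    for line in params.splitlines():
        parts = line.split()
        if len(parts) >= 2 and parts[0] == "num_ctx":
            try:
                return int(parts[-1])
            except ValueError:
                continue  # malformed value: keep scanning

    # 2. Fall back to the model's trained maximum from the GGUF metadata.
    for key, value in (data.get("model_info") or {}).items():
        if "context_length" in key and isinstance(value, (int, float)):
            return int(value)
    return None


# Hypothetical payload, shaped like the fields the diff reads.
sample = {
    "parameters": "temperature 0.7\nnum_ctx 8192",
    "model_info": {"llama.context_length": 131072},
}
print(context_length_from_show(sample))
```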

diff --git a/agent/prompt_builder.py b/agent/prompt_builder.py

index df5532e125..b1b0891f59 100644

--- a/agent/prompt_builder.py

+++ b/agent/prompt_builder.py

@@ -204,6 +204,30 @@ OPENAI_MODEL_EXECUTION_GUIDANCE = (

"the result.\n"

"\n"

"\n"

+ "\n"

+ "NEVER answer these from memory or mental computation — ALWAYS use a tool:\n"

+ "- Arithmetic, math, calculations → use terminal or execute_code\n"

+ "- Hashes, encodings, checksums → use terminal (e.g. sha256sum, base64)\n"

+ "- Current time, date, timezone → use terminal (e.g. date)\n"

+ "- System state: OS, CPU, memory, disk, ports, processes → use terminal\n"

+ "- File contents, sizes, line counts → use read_file, search_files, or terminal\n"

+ "- Git history, branches, diffs → use terminal\n"

+ "- Current facts (weather, news, versions) → use web_search\n"

+ "Your memory and user profile describe the USER, not the system you are "

+ "running on. The execution environment may differ from what the user profile "

+ "says about their personal setup.\n"

+ "\n"

+ "\n"

+ "\n"

+ "When a question has an obvious default interpretation, act on it immediately "

+ "instead of asking for clarification. Examples:\n"

+ "- 'Is port 443 open?' → check THIS machine (don't ask 'open where?')\n"

+ "- 'What OS am I running?' → check the live system (don't use user profile)\n"

+ "- 'What time is it?' → run `date` (don't guess)\n"

+ "Only ask for clarification when the ambiguity genuinely changes what tool "

+ "you would call.\n"

+ "\n"

+ "\n"

"\n"

"- Before taking an action, check whether prerequisite discovery, lookup, or "

"context-gathering steps are needed.\n"

diff --git a/agent/retry_utils.py b/agent/retry_utils.py

new file mode 100644

index 0000000000..71d6963f7b

--- /dev/null

+++ b/agent/retry_utils.py

@@ -0,0 +1,57 @@

+"""Retry utilities — jittered backoff for decorrelated retries.

+

+Replaces fixed exponential backoff with jittered delays to prevent

+thundering-herd retry spikes when multiple sessions hit the same

+rate-limited provider concurrently.

+"""

+

+import random

+import threading

+import time

+

+# Monotonic counter for jitter seed uniqueness within the same process.

+# Protected by a lock to avoid race conditions in concurrent retry paths

+# (e.g. multiple gateway sessions retrying simultaneously).

+_jitter_counter = 0

+_jitter_lock = threading.Lock()

+

+

+def jittered_backoff(

+ attempt: int,

+ *,

+ base_delay: float = 5.0,

+ max_delay: float = 120.0,

+ jitter_ratio: float = 0.5,

+) -> float:

+ """Compute a jittered exponential backoff delay.

+

+ Args:

+ attempt: 1-based retry attempt number.

+ base_delay: Base delay in seconds for attempt 1.

+ max_delay: Maximum delay cap in seconds.

+ jitter_ratio: Fraction of computed delay to use as random jitter

+ range. 0.5 means jitter is uniform in [0, 0.5 * delay].

+

+ Returns:

+ Delay in seconds: min(base * 2^(attempt-1), max_delay) + jitter.

+

+ The jitter decorrelates concurrent retries so multiple sessions

+ hitting the same provider don't all retry at the same instant.

+ """

+ global _jitter_counter

+ with _jitter_lock:

+ _jitter_counter += 1

+ tick = _jitter_counter

+

+ exponent = max(0, attempt - 1)

+ if exponent >= 63 or base_delay <= 0:

+ delay = max_delay

+ else:

+ delay = min(base_delay * (2 ** exponent), max_delay)

+

+ # Seed from time + counter for decorrelation even with coarse clocks.

+ seed = (time.time_ns() ^ (tick * 0x9E3779B9)) & 0xFFFFFFFF

+ rng = random.Random(seed)

+ jitter = rng.uniform(0, jitter_ratio * delay)

+

+ return delay + jitter
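
Reviewer note: a caller would typically wrap a provider request in a retry loop and sleep for the jittered delay between attempts. The sketch below is a self-contained illustration under stated assumptions: `backoff_delay` mirrors the formula in the docstring above but draws jitter from the shared `random` module by default instead of seeding a fresh generator per call, and `call_with_retries` is a hypothetical helper that is not part of this PR.

```python
import random
import time
from typing import Any, Callable, TypeVar

T = TypeVar("T")


def backoff_delay(
    attempt: int,
    *,
    base_delay: float = 5.0,
    max_delay: float = 120.0,
    jitter_ratio: float = 0.5,
    rng: Any = None,
) -> float:
    """min(base * 2^(attempt-1), max_delay) plus uniform jitter in [0, ratio * delay]."""
    source = rng if rng is not None else random
    exponent = max(0, attempt - 1)
    if exponent >= 63 or base_delay <= 0:
        delay = max_delay
    else:
        delay = min(base_delay * (2 ** exponent), max_delay)
    return delay + source.uniform(0, jitter_ratio * delay)


def call_with_retries(
    fn: Callable[[], T],
    *,
    max_attempts: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, sleeping a jittered delay after each failure; re-raise on the last attempt."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts:
                raise
            sleep(backoff_delay(attempt))
    raise RuntimeError("max_attempts must be at least 1")
```

Note that the cap applies before jitter is added, so with the defaults a delay can reach 180 seconds. Passing `sleep` as a parameter lets tests record delays instead of waiting.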

diff --git a/cli.py b/cli.py

index b4358a163c..f00e6b7fea 100644

--- a/cli.py

+++ b/cli.py

@@ -612,6 +612,11 @@ def _run_cleanup():

pass

# Shut down memory provider (on_session_end + shutdown_all) at actual

# session boundary — NOT per-turn inside run_conversation().

+ try:

+ from hermes_cli.plugins import invoke_hook as _invoke_hook

+ _invoke_hook("on_session_finalize", session_id=_active_agent_ref.session_id if _active_agent_ref else None, platform="cli")

+ except Exception:

+ pass

try:

if _active_agent_ref and hasattr(_active_agent_ref, 'shutdown_memory_provider'):

_active_agent_ref.shutdown_memory_provider(

@@ -755,7 +760,10 @@ def _setup_worktree(repo_root: str = None) -> Optional[Dict[str, str]]:

def _cleanup_worktree(info: Dict[str, str] = None) -> None:

"""Remove a worktree and its branch on exit.

- If the worktree has uncommitted changes, warn and keep it.

+ Preserves the worktree only if it has unpushed commits (real work

+ that hasn't been pushed to any remote). Uncommitted changes alone

+ (untracked files, test artifacts) are not enough to keep it — agent

+ work lives in commits/PRs, not the working tree.

"""

global _active_worktree

info = info or _active_worktree

@@ -771,23 +779,27 @@ def _cleanup_worktree(info: Dict[str, str] = None) -> None:

if not Path(wt_path).exists():

return

- # Check for uncommitted changes

+ # Check for unpushed commits — commits reachable from HEAD but not

+ # from any remote branch. These represent real work the agent did

+ # but didn't push.

+ has_unpushed = False

try:

- status = subprocess.run(

- ["git", "status", "--porcelain"],

+ result = subprocess.run(

+ ["git", "log", "--oneline", "HEAD", "--not", "--remotes"],

capture_output=True, text=True, timeout=10, cwd=wt_path,

)

- has_changes = bool(status.stdout.strip())

+ has_unpushed = bool(result.stdout.strip())

except Exception:

- has_changes = True # Assume dirty on error — don't delete

+ has_unpushed = True # Assume unpushed on error — don't delete

- if has_changes:

- print(f"\n\033[33m⚠ Worktree has uncommitted changes, keeping: {wt_path}\033[0m")

- print(f" To clean up manually: git worktree remove {wt_path}")

+ if has_unpushed:

+ print(f"\n\033[33m⚠ Worktree has unpushed commits, keeping: {wt_path}\033[0m")

+ print(f" To clean up manually: git worktree remove --force {wt_path}")

_active_worktree = None

return

- # Remove worktree

+ # Remove worktree (even if working tree is dirty — uncommitted

+ # changes without unpushed commits are just artifacts)

try:

subprocess.run(

["git", "worktree", "remove", wt_path, "--force"],

@@ -796,7 +808,7 @@ def _cleanup_worktree(info: Dict[str, str] = None) -> None:

except Exception as e:

logger.debug("Failed to remove worktree: %s", e)

- # Delete the branch (only if it was never pushed / has no upstream)

+ # Delete the branch

try:

subprocess.run(

["git", "branch", "-D", branch],

@@ -810,19 +822,27 @@ def _cleanup_worktree(info: Dict[str, str] = None) -> None:

def _prune_stale_worktrees(repo_root: str, max_age_hours: int = 24) -> None:

- """Remove worktrees older than max_age_hours that have no uncommitted changes.

+ """Remove stale worktrees and orphaned branches on startup.

- Runs silently on startup to clean up after crashed/killed sessions.

+ Age-based tiers:

+ - Under max_age_hours (24h): skip — session may still be active.

+ - 24h–72h: remove if no unpushed commits.

+ - Over 72h: force remove regardless (nothing should sit this long).

+

+ Also prunes orphaned ``hermes/hermes-*`` and ``pr-*`` local branches that

+ have no corresponding worktree.

"""

import subprocess

import time

worktrees_dir = Path(repo_root) / ".worktrees"

if not worktrees_dir.exists():

+ _prune_orphaned_branches(repo_root)

return

now = time.time()

- cutoff = now - (max_age_hours * 3600)

+ soft_cutoff = now - (max_age_hours * 3600) # 24h default

+ hard_cutoff = now - (max_age_hours * 3 * 3600) # 72h default

for entry in worktrees_dir.iterdir():

if not entry.is_dir() or not entry.name.startswith("hermes-"):

@@ -831,21 +851,24 @@ def _prune_stale_worktrees(repo_root: str, max_age_hours: int = 24) -> None:

# Check age

try:

mtime = entry.stat().st_mtime

- if mtime > cutoff:

+ if mtime > soft_cutoff:

continue # Too recent — skip

except Exception:

continue

- # Check for uncommitted changes

- try:

- status = subprocess.run(

- ["git", "status", "--porcelain"],

- capture_output=True, text=True, timeout=5, cwd=str(entry),

- )

- if status.stdout.strip():

- continue # Has changes — skip

- except Exception:

- continue # Can't check — skip

+ force = mtime <= hard_cutoff # Over 72h — force remove

+

+ if not force:

+ # 24h–72h tier: only remove if no unpushed commits

+ try:

+ result = subprocess.run(

+ ["git", "log", "--oneline", "HEAD", "--not", "--remotes"],

+ capture_output=True, text=True, timeout=5, cwd=str(entry),

+ )

+ if result.stdout.strip():

+ continue # Has unpushed commits — skip

+ except Exception:

+ continue # Can't check — skip

# Safe to remove

try:

@@ -864,10 +887,81 @@ def _prune_stale_worktrees(repo_root: str, max_age_hours: int = 24) -> None:

["git", "branch", "-D", branch],

capture_output=True, text=True, timeout=10, cwd=repo_root,

)

- logger.debug("Pruned stale worktree: %s", entry.name)

+ logger.debug("Pruned stale worktree: %s (force=%s)", entry.name, force)

except Exception as e:

logger.debug("Failed to prune worktree %s: %s", entry.name, e)

+ _prune_orphaned_branches(repo_root)

+

+

+def _prune_orphaned_branches(repo_root: str) -> None:

+ """Delete local ``hermes/hermes-*`` and ``pr-*`` branches with no worktree.

+

+ These are auto-generated by ``hermes -w`` sessions and PR review

+ workflows respectively. Once their worktree is gone they serve no

+ purpose and just accumulate.

+ """

+ import subprocess

+

+ try:

+ result = subprocess.run(

+ ["git", "branch", "--format=%(refname:short)"],

+ capture_output=True, text=True, timeout=10, cwd=repo_root,

+ )

+ if result.returncode != 0:

+ return

+ all_branches = [b.strip() for b in result.stdout.strip().split("\n") if b.strip()]

+ except Exception:

+ return

+

+ # Collect branches that are actively checked out in a worktree

+ active_branches: set = set()

+ try:

+ wt_result = subprocess.run(

+ ["git", "worktree", "list", "--porcelain"],

+ capture_output=True, text=True, timeout=10, cwd=repo_root,

+ )

+ for line in wt_result.stdout.split("\n"):

+ if line.startswith("branch refs/heads/"):

+ active_branches.add(line.split("branch refs/heads/", 1)[-1].strip())

+ except Exception:

+ return # Can't determine active branches — bail

+

+ # Also protect the currently checked-out branch and main

+ try:

+ head_result = subprocess.run(

+ ["git", "branch", "--show-current"],

+ capture_output=True, text=True, timeout=5, cwd=repo_root,

+ )

+ current = head_result.stdout.strip()

+ if current:

+ active_branches.add(current)

+ except Exception:

+ pass

+ active_branches.add("main")

+

+ orphaned = [

+ b for b in all_branches

+ if b not in active_branches

+ and (b.startswith("hermes/hermes-") or b.startswith("pr-"))

+ ]

+

+ if not orphaned:

+ return

+

+ # Delete in batches

+ for i in range(0, len(orphaned), 50):

+ batch = orphaned[i:i + 50]

+ try:

+ subprocess.run(

+ ["git", "branch", "-D"] + batch,

+ capture_output=True, text=True, timeout=30, cwd=repo_root,

+ )

+ except Exception as e:

+ logger.debug("Failed to prune orphaned branches: %s", e)

+

+ logger.debug("Attempted to prune %d orphaned branches", len(orphaned))

+

# ============================================================================

# ASCII Art & Branding

# ============================================================================

@@ -3314,6 +3408,22 @@ class HermesCLI:

flush_tool_summary()

print()

+ def _notify_session_boundary(self, event_type: str) -> None:

+ """Fire a session-boundary plugin hook (on_session_finalize or on_session_reset).

+

+ Non-blocking — errors are caught and logged. Safe to call from any

+ lifecycle point (shutdown, /new, /reset).

+ """

+ try:

+ from hermes_cli.plugins import invoke_hook as _invoke_hook

+ _invoke_hook(

+ event_type,

+ session_id=self.agent.session_id if self.agent else None,

+ platform=getattr(self, "platform", None) or "cli",

+ )

+ except Exception:

+ pass

+

def new_session(self, silent=False):

"""Start a fresh session with a new session ID and cleared agent state."""

if self.agent and self.conversation_history:

@@ -3321,6 +3431,10 @@ class HermesCLI:

self.agent.flush_memories(self.conversation_history)

except (Exception, KeyboardInterrupt):

pass

+ self._notify_session_boundary("on_session_finalize")

+ elif self.agent:

+ # First session or empty history — still finalize the old session

+ self._notify_session_boundary("on_session_finalize")

old_session_id = self.session_id

if self._session_db and old_session_id:

@@ -3365,6 +3479,7 @@ class HermesCLI:

)

except Exception:

pass

+ self._notify_session_boundary("on_session_reset")

if not silent:

print("(^_^)v New session started!")
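Reviewer note on the `_prune_stale_worktrees` hunks above: the change replaces the single `cutoff` with a soft and a hard cutoff. The sketch below restates that decision as a pure function so the tier boundaries are easier to check. `classify_worktree` and its `has_unpushed` parameter are hypothetical names used only for illustration. In the diff itself, the unpushed-commit check is a `git log HEAD --not --remotes` call that runs only in the middle tier.

```python
import time
from typing import Optional


def classify_worktree(
    mtime: float,
    has_unpushed: bool,
    max_age_hours: int = 24,
    now: Optional[float] = None,
) -> str:
    """Return 'keep', 'remove', or 'force-remove' for one worktree.

    Hypothetical helper mirroring the two-tier logic in the diff:
    newer than the soft cutoff is kept, older than three times the
    max age is removed unconditionally, and the tier in between is
    removed only when it has no unpushed commits.
    """
    now = time.time() if now is None else now
    soft_cutoff = now - max_age_hours * 3600       # 24h by default
    hard_cutoff = now - max_age_hours * 3 * 3600   # 72h by default

    if mtime > soft_cutoff:
        return "keep"            # too recent
    if mtime <= hard_cutoff:
        return "force-remove"    # past the hard cutoff, no git check
    return "keep" if has_unpushed else "remove"


if __name__ == "__main__":
    now = 1_000_000.0
    for age_hours, unpushed in [(1, False), (30, True), (30, False), (80, True)]:
        verdict = classify_worktree(now - age_hours * 3600, unpushed, now=now)
        print(f"{age_hours:>3}h unpushed={unpushed}: {verdict}")
```

One consequence worth noting in review: a worktree past the hard cutoff is removed even if it still holds unpushed commits, which the previous `git status --porcelain` check would never have allowed.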

diff --git a/cron/jobs.py b/cron/jobs.py

index 214da521fe..4096d1fd81 100644

--- a/cron/jobs.py

+++ b/cron/jobs.py

@@ -574,12 +574,16 @@ def remove_job(job_id: str) -> bool:

return False

-def mark_job_run(job_id: str, success: bool, error: Optional[str] = None):

+def mark_job_run(job_id: str, success: bool, error: Optional[str] = None,

+ delivery_error: Optional[str] = None):

"""

Mark a job as having been run.

Updates last_run_at, last_status, increments completed count,

computes next_run_at, and auto-deletes if repeat limit reached.

+

+ ``delivery_error`` is tracked separately from the agent error — a job

+ can succeed (agent produced output) but fail delivery (platform down).

"""

jobs = load_jobs()

for i, job in enumerate(jobs):

@@ -588,6 +592,8 @@ def mark_job_run(job_id: str, success: bool, error: Optional[str] = None):

job["last_run_at"] = now

job["last_status"] = "ok" if success else "error"

job["last_error"] = error if not success else None

+ # Track delivery failures separately — cleared on successful delivery

+ job["last_delivery_error"] = delivery_error

# Increment completed count

if job.get("repeat"):
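Reviewer note on the `mark_job_run` change above: the new `delivery_error` argument is stored independently of `last_status` and `last_error`, so a run can be recorded as successful while still carrying a delivery failure. The sketch below isolates just those three field updates on a plain dict. `record_run` is a hypothetical stand-in, not the function in `cron/jobs.py`, and it omits the timestamp, repeat counting, and persistence that the real function performs.

```python
from typing import Optional


def record_run(
    job: dict,
    success: bool,
    error: Optional[str] = None,
    delivery_error: Optional[str] = None,
) -> dict:
    """Hypothetical reduction of the status bookkeeping shown in the diff."""
    job["last_status"] = "ok" if success else "error"
    # The agent error is only kept when the run itself failed.
    job["last_error"] = error if not success else None
    # The delivery error is written on every run, so passing None
    # (a successful delivery) clears any previous failure.
    job["last_delivery_error"] = delivery_error
    return job


if __name__ == "__main__":
    job = {"id": "demo"}
    record_run(job, success=True, delivery_error="platform 'telegram' not configured/enabled")
    print(job)
    record_run(job, success=True)  # the next run delivers fine
    print(job)
```

Because the field is overwritten unconditionally, callers that do not pass `delivery_error` will also clear it, which seems to be the intended "cleared on successful delivery" behavior described in the added comment.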

diff --git a/cron/scheduler.py b/cron/scheduler.py

index 8d71248b4e..33a9b89935 100644

--- a/cron/scheduler.py

+++ b/cron/scheduler.py

@@ -196,7 +196,7 @@ def _send_media_via_adapter(adapter, chat_id: str, media_files: list, metadata:

logger.warning("Job '%s': failed to send media %s: %s", job.get("id", "?"), media_path, e)

-def _deliver_result(job: dict, content: str, adapters=None, loop=None) -> None:

+def _deliver_result(job: dict, content: str, adapters=None, loop=None) -> Optional[str]:

"""

Deliver job output to the configured target (origin chat, specific platform, etc.).

@@ -204,16 +204,16 @@ def _deliver_result(job: dict, content: str, adapters=None, loop=None) -> None:

use the live adapter first — this supports E2EE rooms (e.g. Matrix) where

the standalone HTTP path cannot encrypt. Falls back to standalone send if

the adapter path fails or is unavailable.

+

+ Returns None on success, or an error string on failure.

"""

target = _resolve_delivery_target(job)

if not target:

if job.get("deliver", "local") != "local":

- logger.warning(

- "Job '%s' deliver=%s but no concrete delivery target could be resolved",

- job["id"],

- job.get("deliver", "local"),

- )

- return

+ msg = f"no delivery target resolved for deliver={job.get('deliver', 'local')}"

+ logger.warning("Job '%s': %s", job["id"], msg)

+ return msg

+ return None # local-only jobs don't deliver — not a failure

platform_name = target["platform"]

chat_id = target["chat_id"]

@@ -239,19 +239,22 @@ def _deliver_result(job: dict, content: str, adapters=None, loop=None) -> None:

}

platform = platform_map.get(platform_name.lower())

if not platform:

- logger.warning("Job '%s': unknown platform '%s' for delivery", job["id"], platform_name)

- return

+ msg = f"unknown platform '{platform_name}'"

+ logger.warning("Job '%s': %s", job["id"], msg)

+ return msg

try:

config = load_gateway_config()

except Exception as e:

- logger.error("Job '%s': failed to load gateway config for delivery: %s", job["id"], e)

- return

+ msg = f"failed to load gateway config: {e}"

+ logger.error("Job '%s': %s", job["id"], msg)

+ return msg

pconfig = config.platforms.get(platform)

if not pconfig or not pconfig.enabled:

- logger.warning("Job '%s': platform '%s' not configured/enabled", job["id"], platform_name)

- return

+ msg = f"platform '{platform_name}' not configured/enabled"

+ logger.warning("Job '%s': %s", job["id"], msg)

+ return msg

# Optionally wrap the content with a header/footer so the user knows this

# is a cron delivery. Wrapping is on by default; set cron.wrap_response: false

@@ -307,7 +310,7 @@ def _deliver_result(job: dict, content: str, adapters=None, loop=None) -> None:

if adapter_ok:

logger.info("Job '%s': delivered to %s:%s via live adapter", job["id"], platform_name, chat_id)

- return

+ return None

except Exception as e:

logger.warning(

"Job '%s': live adapter delivery to %s:%s failed (%s), falling back to standalone",

@@ -329,13 +332,17 @@ def _deliver_result(job: dict, content: str, adapters=None, loop=None) -> None:

future = pool.submit(asyncio.run, _send_to_platform(platform, pconfig, chat_id, cleaned_delivery_content, thread_id=thread_id, media_files=media_files))

result = future.result(timeout=30)

except Exception as e:

- logger.error("Job '%s': delivery to %s:%s failed: %s", job["id"], platform_name, chat_id, e)

- return

+ msg = f"delivery to {platform_name}:{chat_id} failed: {e}"

+ logger.error("Job '%s': %s", job["id"], msg)

+ return msg

if result and result.get("error"):

- logger.error("Job '%s': delivery error: %s", job["id"], result["error"])

- else:

- logger.info("Job '%s': delivered to %s:%s", job["id"], platform_name, chat_id)

+ msg = f"delivery error: {result['error']}"

+ logger.error("Job '%s': %s", job["id"], msg)

+ return msg

+

+ logger.info("Job '%s': delivered to %s:%s", job["id"], platform_name, chat_id)

+ return None

_SCRIPT_TIMEOUT = 120 # seconds

@@ -578,11 +585,9 @@ def run_job(job: dict) -> tuple[bool, str, str, Optional[str]]:

except Exception as e:

logger.warning("Job '%s': failed to load config.yaml, using defaults: %s", job_id, e)

- # Reasoning config from env or config.yaml

+ # Reasoning config from config.yaml

from hermes_constants import parse_reasoning_effort

- effort = os.getenv("HERMES_REASONING_EFFORT", "")

- if not effort:

- effort = str(_cfg.get("agent", {}).get("reasoning_effort", "")).strip()

+ effort = str(_cfg.get("agent", {}).get("reasoning_effort", "")).strip()

reasoning_config = parse_reasoning_effort(effort)

# Prefill messages from env or config.yaml

@@ -868,13 +873,15 @@ def tick(verbose: bool = True, adapters=None, loop=None) -> int:

logger.info("Job '%s': agent returned %s — skipping delivery", job["id"], SILENT_MARKER)